What computer use unlocks

Coding models and harnesses are great at executing on a user's intention when the only interface needed is code. But one limit to long-horizon agents working is removing exactly what the human is usually doing: verifying the model did what it said it did, and choosing the correct corrections and next steps. Models should be able to self-verify and self improve: but they will require more than just raw coding to do so.

For models to do everything that someone does every day, it needs to sign into any account in the browser, interact with visual content like CAD or drawing, and learn arbitrary multi-app mutli-turn desktop workflows: it needs to be able to control the mouse and keyboard, quickly. But computers and browsers have spent decades protecting against exploits by walling cross-application interaction to only be accessible via clicks and typing, languages that models are still working on mastering and still need great harnesses for.

Existing screenshot-based computer action models are hard to use primarily due to speed and accuracy: every 6 seconds, one action is recommended, and recognizing mistakes + recovery is difficult. We've spent the last 2 months building a better computer use 'brain' that works across macOS, Windows, and Android, and harnesses to go with it.

Personalization, Speed, and Accuracy

There are many approaches to computer use. We think the ideal system is a combination of per-user specialized learning, harnesses with existing models optimized for accuracy, and custom video models optimized for speed. To optimize computer use tools in the real world, they need to work for 1) workflows specialized to each individual person, 2) overcome any lock-in the user has with their existing agents, and 3) optimize for speed and accuracy.

To specialize computer use agents to each user, our plan is to have the user enable continuous screen recording. From their daily activities, our goal is to learn habits and custom trajectories. We explored distilling these via RL, distilling into detailed skills, and per-task LoRAs that specialize a computer use model's execution to a specific user's workflow.

To optimize for speed, we optimized the details. For instance, we use quick, learned heuristics to learn when to send screenshots and not, when to use a fast video action model vs a language model, and multi-model routing based on intelligence. To optimize for accuracy, we used auxiliary signals (self-reflection, AXTree ground truths, user trajectories) to aggressively un-learn outdated website setups and rapidly learn new ones. We are actively working on the next step: a pipeline that lets us do so automatically by spinning up hundreds of parallel sandboxes to learn how to navigate new websites.

We built two systems. cuagent handles desktop (macOS and Windows). AgentRelay handles iOS, Android, and Apple platforms. They share a core philosophy: parallelize everything, verify locally, and never depend on a single model.

On machine

Thin client

Runs on the target machine. Captures screenshots, stream compressed diffs to the server. Executes returned actions locally via platform-native APIs, and do rapid verification and tree 'pruning'.

On cloud

Reasoning service

Runs OCR racing, element map building, multi-model LLM racing, planning, and reflection. Clients upload tile diffs or codec diffs for fast frame reconstruction.

Desktop clients only handle screenshot streaming and action execution + verification, and delegate most reasoning to a shared service.

Low Latency Harnesses

The main issue with computer use is that it doesn't use my computer faster than I do. Beyond simple optimizations like multilayer reasoning (i.e. fast model acts while slow model plans and occasionally injects guidance), we need to parallelize planning and execution in a deeper way.Branching tree search for planning

The biggest gains from for latency is adding conditional parallelization to action planning. Current agents plan linearly: one action, observe, one action, observe. Our idea was tree-based planning, where the agent explores multiple possible action paths in parallel, evaluates which branch best matches the goal, commits to that node, then branches again from the new state. When the UI changes unexpectedly, the tree collapses and re-expands from the current reality. This lets us plan many steps ahead without being fragile to mid-plan surprises.

What does this latency mean in practice? If you feed, say, the last 1 second of video data into a model that makes a prediction in 500ms, your harness is just wasting 500ms. But, in the spirit of speculative decoding, you can try to predict all possible *next* 1 second possibilities. Let's say running that video generation inference also takes 500ms each. Then, you can feed all of them into your prediction model *while* that 1 second is happening, and generate several plans. Then, at the end of that second, your problem becomes *determining which world you are in* and immediately taking that action, not waiting to predict the action anymore.

Before expending inference effort on this full video action idea, we wanted to validate this first. The quickest implementation of this is to only use language models: all the state predictions are defined with text (i.e. either I see X typed, or I see screen Y, or I see a popup), all the validations are text checks (i.e. did X get typed), and all the actions come from a text-only or screenshot-only prediction. To keep latency variance low, we run several action prediction models in parallel (i.e. 3.1 Flash Lite, Claude Haiku 4.5, our fine-tuned Qwen 3.5), and take the first valid JSON response.

Verification Gates

To rapidly verify action success, we run a local OCR-only verification check. This takes under 200ms and avoids the agent from going too far off the right trajectory.

step 1: click btn_compose

verify: text_visible "New Message"

step 2: type inp_to "alice@example.com"

verify: text_visible "alice@"

step 3: click btn_send

verify: text_not_visible "New Message"

Verify gates check simple conditions: is this text visible? Does this element exist? Is the right app in the foreground? Is the keyboard showing? We pay the cost of a single OCR, not a full LLM forward pass.

Now, instead of multi-step execution being the standard screenshot-action-screenshot loop that costs 3-8 seconds per step, we can chain 3-10 possible actions per iteration. The verify gate catches failures immediately. If step 3's verify fails, we break, re-capture the screen, and re-plan. Tasks that take standard agents 15-20 reasoning model calls (at 5 seconds each) take us *more* model calls and tokens total, but less end to end latency.

<250ms

OCR + Verify gate latency

3-5

Tree depth per iteration

3-5

Choices per layer of the tree

Frame Streaming

The latency for upload, even of a 800KB JPEG (an average compressed full screen), on a standard 1 MBPS upload home connection, is about 0.8 seconds -- this kills both OCR latency and LLM inference time. Instead, we have the client continuously stream compressed frames to our server at 3 frames per second, even if LLM inference is only happening at one frame every 3 seconds. When we need to actually process it, we only need to upload the latest XOR, allowing us to rapidly re-render the full frame on the server. Compressed diffs (mostly 0s) are only ~20KB per frame.

Screen to Text Translation

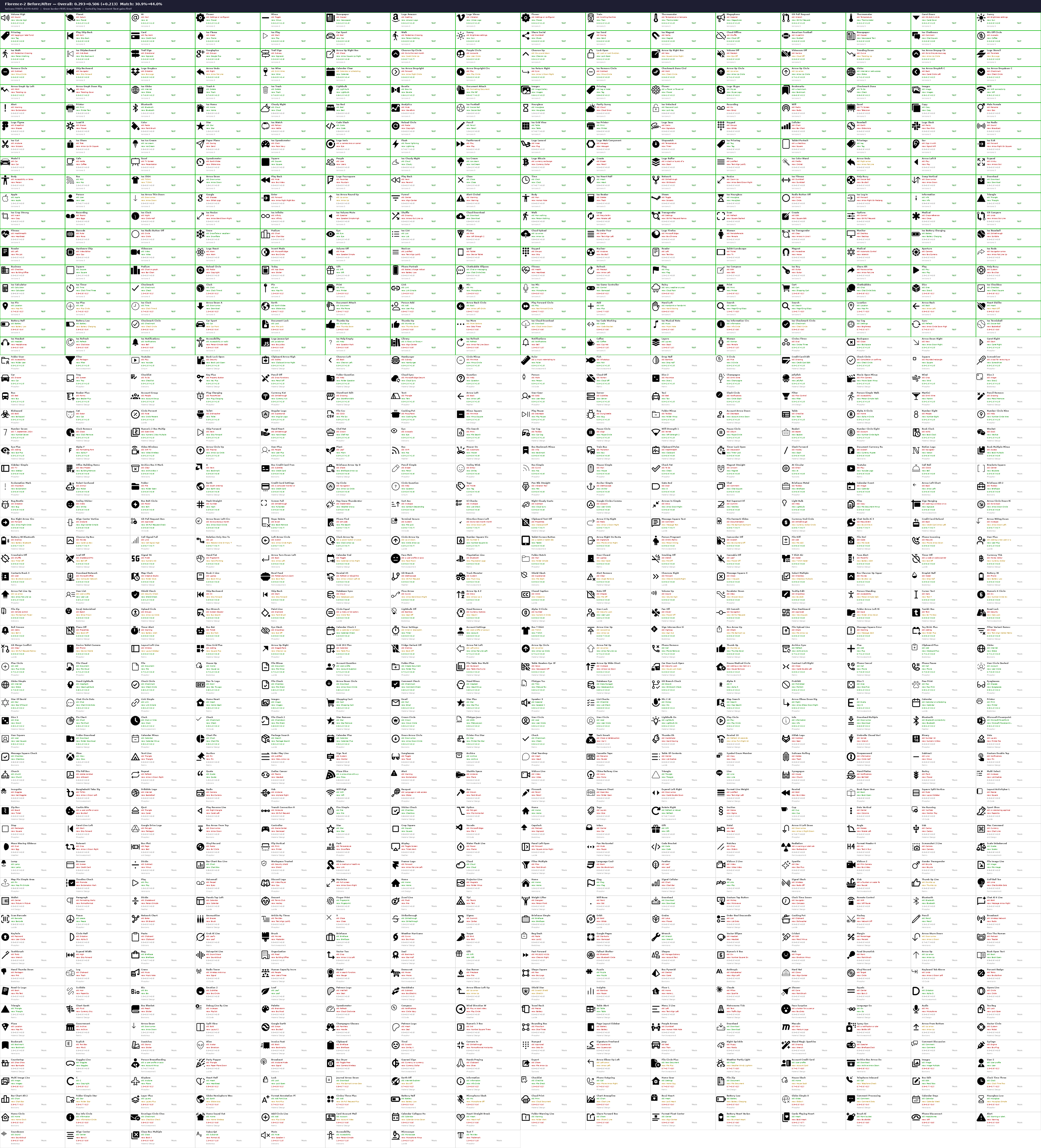

If we want to prototype what this rapid action video model looks like with a text model, we need to convert the current on-screen images to text rapidly. Existing harnesses rely on different things here, including accessibility trees or screenshots. We think that accessibility tree data for prediction is an unreliable crutch, as that data may not always be available (when it is, we can use it as ground truth data to fine tune on). With good OCR, we can give the LLM semantic IDs like btn_save with pixel coordinates as a substitute for raw screenshots. To do this, we fine-tuned Omniparser V2 to work on icons extremely well, and to identify which elements are clickable. You can see the results below.

Custom video models

Frontier APIs are the default for text input, but they're not always the right tool. For latency-critical grounding or action prediction on dense visual environments such as CAD, design, navigation, or video editing, we train our own rapid action video codec models and swap them in when we need tighter control over speed, cost, or behavior.

We built a multi-turn computer use agent with a OneVision video encoder that takes in the codec diff of the last frame, a Qwen 3 reasoning layer, and a lightweight action decoder. The vision encoder is frozen; we train LoRA adapters (rank 64, targeting all attention and MLP projections) on trajectories labeled by a more intelligent model, across Ubuntu, Windows, and macOS. When the task is well-scoped and latency matters more than raw reasoning power, we swap the harness from the frontier models to our custom video models and can cut inference latency by a factor of 5.

Training data comes from our own screencap.sh tool: we record labeled desktop sessions, extract frames at 4-10 FPS, and generate per-frame action supervision with click coordinate heatmaps. The codec-style sparse patch selection from the OneVision encoder means we process dense frames with sparse tokens, keeping inference fast even on video input.

This decoder handles the cases where you don't need reasoning, you just need to know where to click next. It runs alongside the main harness as a fast fallback: if the element map is rich enough and the next action is obvious, the decoder resolves it in milliseconds instead of waiting for an LLM round-trip.

On-device mobile agents

Every Android agent we saw either ran on an emulator or had the phone tethered via ADB and allowed ADB commands, which we thought was cheating. We wanted our system to be able to run with no setup and untethered, on a new Android phone. AgentRelay runs entirely on the phone, for both Android and iOS. This is how we expect real users to use mobile computer use: they'll tell their phone to do something (likely with voice) and put it down. Our floating bubble overlay lets you launch a task, watch the agent work via a transparent status overlay, keeps the screen on, and the user can intervene if needed by just tapping.

INSERT DEMO HERE

What's next

Integrating with Coding Agent Harnesses

So far, coding harnesses can't see a screen, click a button, grab an API key, or navigate a form. The inability to manipulate a screen is a big reason why they can't do end to end tasks. We think computer use will become a tool call when your harness needs it.

Massively parallel trajectory generation

Supervised training data for computer use is expensive to collect because it requires human demonstrators. We're building infrastructure to generate it synthetically at scale. Thousands of forked VMs, each running a headless browser, randomly exploring websites: clicking links, filling forms, navigating menus. Every session is recorded as a frame sequence with pixel-level action labels. After collection, we retroactively label these trajectories with task descriptions and success signals, producing training data without any human in the loop. The key insight is that random exploration with retroactive labeling is cheaper per trajectory than directed human demonstration, and scales horizontally with compute.

One-shot grounded reasoning

Current computer use agents separate perception (detect elements, build an element map) from reasoning (decide what to do). This pipeline adds latency and loses spatial context at every handoff. We're training models that ground directly: given a screenshot and an instruction, output the target coordinates and action type in a single forward pass with chain-of-thought reasoning embedded in the generation. No element map construction, no separate OCR step, no coordinate post-processing. The model learns to attend to the relevant region and reason about it simultaneously.

Self-reflection

We built a self-reflection mechanism that allows the agent to review its own actions and decisions. Every time the agent runs on an app and screws up, it notices and learns a skill to prevent it. Every time it succeeds two steps in a row, it learns a skill to chain them in the tree in the future. Over time, the agent gets better.

VM Forking

To do GPRO style online RL, we can use super optimized Ubuntu forking to immediately fork and rollback if system state is entirely contained within the VM (i.e. not on an external database). Training this on open source repos and desktop apps will allow us to do RL on our agents.

Video generation and comparison

We want to use a fast, near-realtime video generation algorithm to predict the all the possible evolutions of the state. This will let us compare the predicted possible states to the actual state, and correct in real time.

Sample-efficient imitation learning

The hardest tasks aren't in the training distribution. Niche enterprise software, internal tools, and domain-specific workflows will not appear in our synthetic trajectory data unless we obtain it from enterprises. To learn those quickly, we want a rapid imitation learning pipeline where a human demonstrates a new task once, and the agent generalizes from that single demonstration. The system records the demonstration as a trajectory, and optionally creates augmented training variants by changing the fields filled in i.e. form entry variants. One demonstration produces hundreds of training samples. The goal is to move from "train on millions of trajectories" to "show it once and it learns," closing the gap between general-purpose agents and task-specific automation.

We're hiring engineers who want to work on difficult systems, data collection, and training the best models. If you're interested in building the systems layer for autonomous computer agents, reach out at aayushg@mit.edu.